An OpenAI neural network has learned to play Minecraft by watching YouTube videos, and it's pretty good at it.

The OpenAI model goes beyond basic crafting and survival: it can perform many of the same complex tasks a human Minecraft player would. In its blog post, OpenAI shows videos of the model swimming, hunting animals for food, and eating that food.

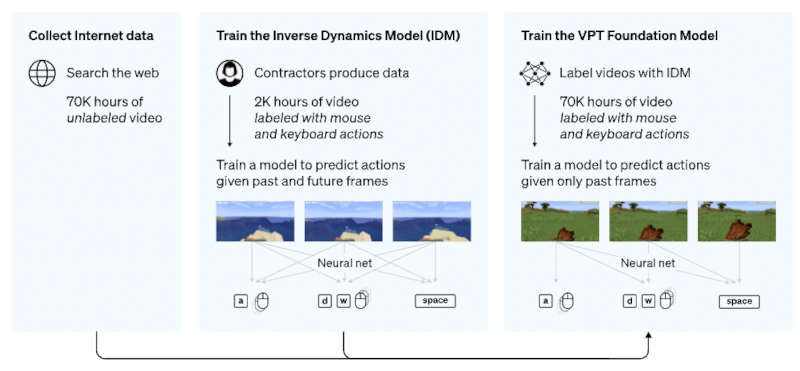

The researchers used a technique called Video PreTraining (VPT). It starts with a small set of gameplay recordings in which the player's actual inputs, such as button presses and mouse movements, are logged alongside the video. Those labeled examples are then used to train a model that can infer the same inputs from video alone.

First, OpenAI collected about 2,000 hours of gameplay from contractors whose keyboard and mouse inputs were recorded while they played, so every moment of video is paired with the exact action that produced it. This is how the neural network learns that to jump onto a block, it has to "press" the spacebar. Building on this, the AI learned to gather resources, craft items, and perform other actions available in Minecraft.
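To make the idea of pairing video with recorded inputs more concrete, here is a minimal Python sketch of how one recorded moment of gameplay (the keys held down plus the mouse movement) might be turned into a fixed-size action record. The key names, action names, and the `LabeledFrame` and `to_action_vector` helpers are hypothetical illustrations; OpenAI's actual action format is larger and encoded differently.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical, simplified subset of Minecraft controls (not VPT's real action space).
KEY_TO_ACTION = {
    "w": "forward",
    "s": "back",
    "a": "left",
    "d": "right",
    "space": "jump",
    "left_click": "attack",
}


@dataclass
class LabeledFrame:
    """One video frame plus the raw input recorded while it was on screen."""
    frame_index: int
    keys_down: set[str] = field(default_factory=set)
    mouse_dx: float = 0.0
    mouse_dy: float = 0.0


def to_action_vector(frame: LabeledFrame) -> dict[str, float]:
    """Convert raw recorded input into a fixed set of named action values."""
    # Every known action starts "off"; keys outside the mapping are ignored.
    action = {name: 0.0 for name in KEY_TO_ACTION.values()}
    for key in frame.keys_down:
        if key in KEY_TO_ACTION:
            action[KEY_TO_ACTION[key]] = 1.0
    # Mouse movement turns the camera, so it is stored as continuous values.
    action["camera_yaw"] = frame.mouse_dx
    action["camera_pitch"] = frame.mouse_dy
    return action


# A frame where the player runs forward, jumps, and turns slightly right.
example = to_action_vector(LabeledFrame(42, {"w", "space"}, mouse_dx=3.0))
```

Here `example["jump"]` and `example["forward"]` are `1.0`, while untouched controls such as `attack` stay at `0.0`.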

The researchers then used this labeled data to train an "Inverse Dynamics Model" (IDM) that predicts which actions are being performed in a gameplay video. The trained IDM was then run over 70,000 hours of online video to label it with the actions it inferred, and a separate model learned to play by imitating those labeled actions. With further fine-tuning, the model learned to craft a diamond pickaxe, a task that takes a proficient human player more than 20 minutes and about 24,000 actions.
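VPT's pipeline runs in three stages: train an IDM on a small set of videos with recorded actions, use it to pseudo-label a much larger set of unlabeled videos, then train a policy to imitate those pseudo-labels (behavioral cloning). The deliberately tiny Python sketch below illustrates that flow under loudly stated assumptions: a made-up one-dimensional "game" where each frame is just a position and each action shifts it, and simple counting tables standing in for the large neural networks OpenAI actually trained. All function names are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical toy "game": a frame is the player's x-position and each
# action moves it by a fixed amount ("left" = -1, "noop" = 0, "right" = +1).


def train_idm(labeled_videos):
    """Inverse dynamics: learn which action explains each frame-to-frame change.

    Like an IDM, it may look at the *next* frame, which makes inferring the
    action much easier than predicting it from the present alone."""
    votes = defaultdict(Counter)
    for frames, actions in labeled_videos:
        for t, action in enumerate(actions):
            votes[frames[t + 1] - frames[t]][action] += 1
    return {delta: c.most_common(1)[0][0] for delta, c in votes.items()}


def pseudo_label(idm, frames):
    """Use the trained IDM to infer actions for a video with no recorded input."""
    return [idm.get(frames[t + 1] - frames[t], "noop") for t in range(len(frames) - 1)]


def train_policy(videos_with_labels):
    """Behavioral cloning: map the current frame to the action most often taken there.

    Unlike the IDM, the policy only sees the present frame, as a real player would."""
    votes = defaultdict(Counter)
    for frames, actions in videos_with_labels:
        for frame, action in zip(frames, actions):
            votes[frame][action] += 1
    return {frame: c.most_common(1)[0][0] for frame, c in votes.items()}


# Stage 1: a small "contractor" set with frames and recorded actions.
labeled = [([0, 1, 2, 2, 1], ["right", "right", "noop", "left"])]
idm = train_idm(labeled)

# Stage 2: a larger "online" set with frames only; the IDM fills in actions.
unlabeled = [[0, 1, 2, 3], [5, 4, 3]]
pseudo = [(frames, pseudo_label(idm, frames)) for frames in unlabeled]

# Stage 3: train a policy that imitates the pseudo-labeled behavior.
policy = train_policy(pseudo)
```

The design choice the sketch tries to preserve is the asymmetry between the two models: the IDM can use the following frame to work out what happened, while the final policy must decide what to do from the current frame alone.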

The potential of artificial intelligence never ceases to amaze us. Recently we have seen AI systems such as DALL-E, Imagen, and Speech2Face, and watched a US Air Force military plane fly with an AI as its co-pilot.